As you have probably noticed, my reviews are free of advertising. Instead of distracting you with annoying ads, I kindly request your donation. If you find the contents of this page to be useful, please consider making a donation by clicking the Donate button below.

Sjöström QRV08 Headphone Amp

I had the opportunity to borrow a Sjöström QRV08 current feedback headphone amp and put it through its paces on the test bench.

Overall, this particular build is quite nice. It appears to have been built according to the instructions, with one modification: the PCB-mounted E-I core transformers used in the original build have been replaced with toroidal transformers connected to the PCB with short leads. The volume control is a 24-step Elma attenuator. The internal wiring is nicely done: the wires are kept short, and shielded cable is used for the connections to the input RCA jacks. Very nice.

Summary of Key Performance Parameters

The key performance parameters for the QRV08 are tabulated below.

| Parameter | Value | Notes |
|---|---|---|
| Output Power | 140 mW | 20 Ω, THD+N < 0.005 % |
| Output Power | 200 mW | 32 Ω, THD+N < 0.005 % |
| Output Power | 225 mW | 300 Ω, THD+N < 0.005 % |
| THD | 0.00062 % | 1 kHz, 200 mW, 300 Ω |
| THD | 0.00042 % | 1 kHz, 200 mW, 32 Ω |
| THD+N | 0.0015 % | 1 kHz, 200 mW, 300 Ω |
| IMD: SMPTE 60 Hz + 7 kHz @ 4:1 | 0.0023 % | 200 mW, 32 Ω |
| IMD: DFD 18 kHz + 19 kHz @ 1:1 | 0.0012 % | 200 mW, 32 Ω |
| IMD: SMPTE 60 Hz + 7 kHz @ 4:1 | 0.0060 % | 200 mW, 300 Ω |
| IMD: DFD 18 kHz + 19 kHz @ 1:1 | 0.0012 % | 200 mW, 300 Ω |
| Multi-Tone IMD Residual | < -106 dBV | AP 32-tone, 200 mW, 300 Ω |
| Channel Separation | 90 dB | 1 kHz |
| Channel Separation | > 85 dB | 20 Hz - 20 kHz |
| Gain | 12.7 dB | 1 kHz |
| Gain Variation | ±3 dB | 20 Hz - 20 kHz |
| Input Sensitivity | 1.80 V RMS | 200 mW, 300 Ω |
| Bandwidth | 20 Hz - 1.5 MHz | |
| Full-Power Bandwidth | 1.15 MHz | |
| Slew Rate | 80 V/µs | 300 Ω in parallel with 220 pF load |
| Total Integrated Noise and Residual Mains Hum | 3.8 µV RMS | 20 Hz - 20 kHz, A-weighted, min. volume |
| Total Integrated Noise and Residual Mains Hum | 6.5 µV RMS | 20 Hz - 20 kHz, unweighted, min. volume |
| Residual Mains Hum | < -124 dBV | |
| Dynamic Range (AES17) | 120 dB | 1 kHz |

All parameters were measured at the maximum setting of the volume control unless otherwise noted.

For a stand-alone headphone amplifier, this amp has surprisingly low output power. Granted, it drives my Sennheiser HD-650 just fine, but those are also very easy to drive. This amp is likely to run out of steam when driving planar headphones such as the HIFIMAN HE6. The measurement below shows the THD+N versus output voltage swing for the QRV08. At the onset of clipping, the output voltage swing is 11.6 V peak (8.2 V RMS). The peak output current at the onset of clipping is 120 mA into a 20 Ω load, resulting in 140 mW of output power. The two limits meet at about 11.6 V / 120 mA ≈ 97 Ω, so the output power is limited by the output current drive capability for impedances below roughly 100 Ω and by the voltage swing for impedances above roughly 100 Ω.
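The current-limit and voltage-limit behaviour described above can be captured in a simple idealized model: the achievable peak output voltage is whichever limit is hit first, and the sine-wave power follows from that peak. This is a minimal sketch assuming the 11.6 V peak and 120 mA peak figures apply independently of load and frequency; it ignores supply sag and thermal effects, so it should be read as an upper bound rather than a prediction.

```python
# Limits taken from the measurements above (assumed load-independent).
V_PEAK_MAX = 11.6   # peak output voltage at onset of clipping, volts
I_PEAK_MAX = 0.120  # peak output current at onset of clipping, amperes


def max_sine_power(load_ohms: float,
                   v_peak_max: float = V_PEAK_MAX,
                   i_peak_max: float = I_PEAK_MAX) -> float:
    """Idealized maximum sine-wave power (W) into a resistive load.

    The achievable peak voltage is limited either by the voltage swing or
    by the current limit times the load resistance, whichever is lower.
    """
    v_peak = min(v_peak_max, i_peak_max * load_ohms)
    return v_peak ** 2 / (2.0 * load_ohms)  # P = Vpk^2 / (2 R) for a sine


def crossover_impedance(v_peak_max: float = V_PEAK_MAX,
                        i_peak_max: float = I_PEAK_MAX) -> float:
    """Load impedance (ohms) where the current and voltage limits coincide."""
    return v_peak_max / i_peak_max


if __name__ == "__main__":
    for r in (20, 32, 100, 300, 600):
        print(f"{r:4d} ohm: {max_sine_power(r) * 1000:6.1f} mW")
    print(f"crossover: {crossover_impedance():.1f} ohm")
```

For 20 Ω the model gives about 144 mW and for 300 Ω about 224 mW, in line with the table, and the crossover lands near 97 Ω. At 32 Ω it predicts about 230 mW, somewhat above the measured 200 mW, which is a reminder that the model only bounds what the output stage can do.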

The THD+N versus output power for a 300 Ω load is shown below. The amp exhibits a fair amount of 1/f noise, so the THD+N measurement is a bit noisy at low power levels. The amp starts clipping at about 225 mW into 300 Ω. The two channels show nearly identical performance.

Note: Ch1 = left channel; Ch2 = right channel.

Repeating this measurement with a 32 Ω load yields the result shown below. The left and right channels perform nearly identically up to 200 mW, where the left channel clips slightly earlier than the right.

The THD+N versus frequency profile of this amp, shown below, is odd to say the least; the hump at low frequencies is rather unusual. The sudden drop in THD+N at 12 kHz occurs because the 6th harmonic (72 kHz) falls outside the measurement bandwidth.
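The drop at 12 kHz comes down to simple arithmetic: harmonic n of a fundamental f0 sits at n·f0, and any harmonic above the analyzer's measurement bandwidth no longer contributes to the THD+N reading. The helper below lists which harmonics are still counted. The measurement bandwidth is not stated in this review, so the 70 kHz used in the example is purely hypothetical.

```python
def harmonics_in_band(f0_hz: float, bandwidth_hz: float, max_order: int = 10) -> list[int]:
    """Harmonic orders (2..max_order) whose frequency n*f0 lies within the
    measurement bandwidth, i.e. the ones a THD+N reading still includes."""
    return [n for n in range(2, max_order + 1) if n * f0_hz <= bandwidth_hz]


def dropout_frequency(order: int, bandwidth_hz: float) -> float:
    """Fundamental frequency above which harmonic `order` leaves the band."""
    return bandwidth_hz / order


if __name__ == "__main__":
    BW = 70e3  # hypothetical analyzer bandwidth, not stated in the review
    for f0 in (10e3, 11e3, 12e3):
        print(f"{f0 / 1e3:.0f} kHz -> harmonics counted: {harmonics_in_band(f0, BW)}")
```

With that hypothetical bandwidth, the 6th harmonic is counted at 11 kHz (66 kHz) but not at 12 kHz (72 kHz), so the reading steps down once the fundamental crosses `dropout_frequency(6, BW)`.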

At 32 Ω, the THD+N looks closer to what I would have expected: reasonably flat up to the frequency where the amp runs out of loop gain, after which the THD starts to rise. Note that even with a 32 Ω load, some of the low-frequency THD hump remains. Above 1 kHz, the left channel distorts more than the right. As the amp is a dual mono construction, this difference is likely due to part-to-part variation between the two channels.
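The rise in THD at higher frequencies follows from basic feedback theory: the open-loop distortion is divided by roughly |1 + T|, where T is the loop gain, and once the loop gain falls at 6 dB/octave the distortion rises at the same rate. The sketch below assumes a single-pole loop gain with a hypothetical DC loop gain of 1000 (60 dB) and a hypothetical pole at 100 Hz. The QRV08's actual loop gain is not known here (and in a current feedback amplifier it depends heavily on the feedback resistor), so the numbers are illustrative only.

```python
def loop_gain(f_hz: float, t0: float = 1000.0, f_pole_hz: float = 100.0) -> complex:
    """Single-pole loop gain T(f) = T0 / (1 + j f / fp). Hypothetical values."""
    return t0 / (1 + 1j * f_hz / f_pole_hz)


def closed_loop_thd(open_loop_thd: float, f_hz: float, **kwargs) -> float:
    """Closed-loop THD approximated as open-loop THD / |1 + T(f)|."""
    return open_loop_thd / abs(1 + loop_gain(f_hz, **kwargs))


if __name__ == "__main__":
    THD_OPEN = 1.0  # percent; hypothetical and assumed frequency-independent
    for f in (100, 1e3, 5e3, 10e3, 20e3):
        print(f"{f:7.0f} Hz: {closed_loop_thd(THD_OPEN, f):.5f} %")
```

Between 10 kHz and 20 kHz the computed THD roughly doubles, i.e. rises at about 6 dB/octave, which is the slope to look for in a plot like the 32 Ω one.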

The THD was further characterized using a precision 1 kHz oscillator. As the frequency spectrum below shows, the amp produces many high-order harmonics. With a 300 Ω load, the THD measures 0.00062 %.
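THD is the root-sum-square of the individual harmonic amplitudes relative to the fundamental, which is why many small high-order components still add up. The sketch below shows that calculation plus a conversion from percent to dB. The harmonic amplitudes you feed it would come from an FFT spectrum such as the one above; none are read off that plot here.

```python
import math


def thd_percent(fundamental_rms: float, harmonic_rms: list[float]) -> float:
    """THD (%) = sqrt(sum of squared harmonic amplitudes) / fundamental."""
    return 100.0 * math.sqrt(sum(h * h for h in harmonic_rms)) / fundamental_rms


def percent_to_db(thd_pct: float) -> float:
    """Express a distortion ratio given in percent as dB relative to the fundamental."""
    return 20.0 * math.log10(thd_pct / 100.0)


if __name__ == "__main__":
    print(f"0.00062 % = {percent_to_db(0.00062):.1f} dB")  # 300 ohm result
    print(f"0.00042 % = {percent_to_db(0.00042):.1f} dB")  # 32 ohm result
```

In dB terms, the two THD figures in this review are about -104.2 dB and -107.5 dB relative to the fundamental.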

Interestingly, the THD actually improves under a heavier load. The graph below shows the THD of the QRV08 when driving a 32 Ω load; it comes in at 0.00042 %.

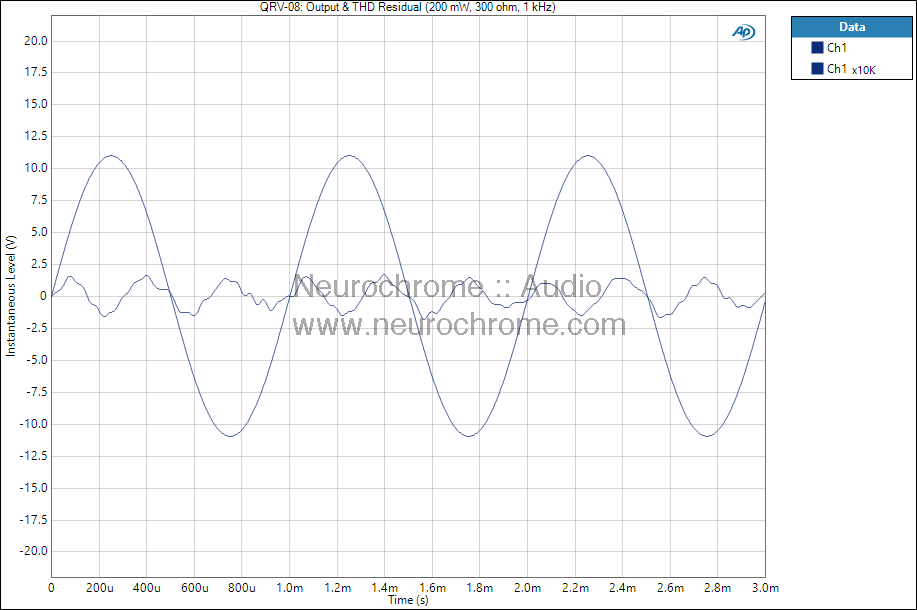

Another way of showing the THD is to look at the output signal together with the THD residual. This measurement is shown below. Note that the THD residual was amplified by 80 dB (×10,000).
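An analyzer produces the residual by notching out the fundamental and amplifying what is left. The sketch below swaps the analog notch for a digital equivalent: it removes the fundamental by projecting a sampled record onto a sine and cosine at the test frequency, then applies the same 80 dB (×10,000) gain. It assumes the record spans an integer number of cycles so the projection is exact; the 3rd-harmonic level in the demo is made up.

```python
import math


def _fundamental_basis(k: int, n: int, cycles: int) -> tuple[float, float]:
    """Sine and cosine of the fundamental at sample k of an n-sample record."""
    phase = 2 * math.pi * cycles * k / n
    return math.sin(phase), math.cos(phase)


def thd_residual(samples: list[float], cycles: int, gain_db: float = 80.0) -> list[float]:
    """Remove the fundamental from `samples` and amplify what is left.

    `samples` must hold exactly `cycles` periods of the fundamental, uniformly
    sampled, so projecting onto sine and cosine isolates the fundamental.
    """
    n = len(samples)
    basis = [_fundamental_basis(k, n, cycles) for k in range(n)]
    # Amplitudes of the fundamental's sine and cosine parts (one DFT bin).
    a = 2.0 / n * sum(x * s for x, (s, _) in zip(samples, basis))
    b = 2.0 / n * sum(x * c for x, (_, c) in zip(samples, basis))
    gain = 10 ** (gain_db / 20.0)  # 80 dB -> x10,000
    return [gain * (x - a * s - b * c) for x, (s, c) in zip(samples, basis)]


if __name__ == "__main__":
    N, CYCLES = 4800, 10
    # Hypothetical signal: 1 V peak fundamental plus a 3rd harmonic at 10 uV peak.
    sig = [math.sin(2 * math.pi * CYCLES * k / N)
           + 1e-5 * math.sin(2 * math.pi * 3 * CYCLES * k / N) for k in range(N)]
    res = thd_residual(sig, CYCLES)
    print(f"residual peak: {max(res):.4f} V")
```

The 10 µV harmonic comes out as a 0.1 V residual, which is what makes distortion at the 0.001 % level visible next to the full-scale output trace.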

The intermodulation distortion (IMD) is pretty decent, though the SMPTE IMD with a 300 Ω load is considerably worse (0.0060 %) than with a 32 Ω load (0.0023 %). The IMD for the 300 Ω load is shown below.

Because the test signal contains a 60 Hz component, the SMPTE test is useful for uncovering power supply and thermal issues. I therefore find it counterintuitive that the performance at 300 Ω (hardly any load on the power supply and little power dissipated in the output stage) is worse than at 32 Ω, where both the power supply and the output stage get a workout. I am not implying that there is an issue here; I am merely pointing out an unexpected result. The result of the SMPTE IMD test is shown below.
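To make the test concrete: the SMPTE signal combines a 60 Hz tone and a 7 kHz tone at a 4:1 amplitude ratio, and any nonlinearity that modulates the 7 kHz tone at the low frequency (supply ripple or thermal effects included) shows up as sidebands around 7 kHz spaced by multiples of 60 Hz. The sketch below generates the signal, lists the sideband frequencies, and expresses the result as the RMS sum of the sidebands relative to the 7 kHz tone. That is one common formulation; analyzers differ in the details, and the sideband amplitudes in the test are hypothetical.

```python
import math

F_LOW, F_HIGH, RATIO = 60.0, 7000.0, 4.0  # SMPTE: 60 Hz + 7 kHz at 4:1 amplitude


def smpte_signal(t: float, peak: float = 1.0) -> float:
    """SMPTE test signal at time t (s); the two tone amplitudes sum to `peak`."""
    a_low = peak * RATIO / (RATIO + 1)
    a_high = peak / (RATIO + 1)
    return (a_low * math.sin(2 * math.pi * F_LOW * t)
            + a_high * math.sin(2 * math.pi * F_HIGH * t))


def sideband_frequencies(orders: int = 3) -> list[float]:
    """IMD sideband frequencies f_high -/+ n*f_low for n = 1..orders."""
    return [F_HIGH + s * n * F_LOW for n in range(1, orders + 1) for s in (-1, 1)]


def smpte_imd_percent(carrier_amp: float, sideband_amps: list[float]) -> float:
    """IMD (%) as the RMS sum of sideband amplitudes relative to the 7 kHz tone."""
    return 100.0 * math.sqrt(sum(a * a for a in sideband_amps)) / carrier_amp


if __name__ == "__main__":
    print(sideband_frequencies(2))
```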

The 18+19 kHz (1:1) IMD test is useful for estimating the available loop gain near the top of the audible spectrum, which makes it a good indicator of quality circuit design and layout. The QRV08 performs pretty decently here. The IMD (18+19 kHz @ 1:1) for a 300 Ω load is shown below; it measures 0.0012 %.
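Part of what makes the 18+19 kHz test convenient is that the two tones sit near the top of the band while the most telling distortion products land inside it: the second-order difference tone at 1 kHz and third-order products at 17 kHz and 20 kHz. The helper below enumerates those products for any tone pair. It is plain arithmetic and makes no assumption about this particular amplifier.

```python
def imd_products(f1: float, f2: float, max_order: int = 3,
                 f_max: float = 20e3) -> dict[int, list[float]]:
    """IMD product frequencies |m*f1 + n*f2| grouped by order |m| + |n|.

    Only mixed products (m and n both non-zero) in (0, f_max] are kept,
    so pure harmonics of either tone are excluded.
    """
    products: dict[int, set[float]] = {}
    for m in range(-max_order, max_order + 1):
        for n in range(-max_order, max_order + 1):
            order = abs(m) + abs(n)
            if m == 0 or n == 0 or order > max_order:
                continue
            f = abs(m * f1 + n * f2)
            if 0 < f <= f_max:
                products.setdefault(order, set()).add(f)
    return {k: sorted(v) for k, v in sorted(products.items())}


if __name__ == "__main__":
    print(imd_products(18e3, 19e3))
```

Raising `max_order` shows where the higher-order products fall; they do not appear in the audio band at order 3.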

With a 32 Ω load, the distribution of the IMD products changes (interesting!), but the total IMD remains 0.0012 %.

The multi-tone IMD test uses a test signal with 32 logarithmically spaced tones. This signal sounds a lot like an out-of-tune pipe organ and is a reasonable representation of a typical music signal, so the test provides a good measure of how the amplifier behaves when reproducing music. It is also a very challenging test, and it therefore reliably separates the good amplifiers from the truly great ones. The peak amplitude of the test signal is adjusted to just below clipping, resulting in 200 mW into 300 Ω. The amp reproduces this signal just fine, although it is interesting how many IMD components creep up. They are all quite far down, though.
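A multi-tone signal of this kind can be approximated by spacing tones logarithmically across the audio band and snapping each one to an FFT bin, so each tone's energy stays in a single bin and the IMD products between the tones can be read cleanly. The sketch below does exactly that. It is not Audio Precision's actual 32-tone set, whose exact frequencies are not given here; the 20 Hz to 20 kHz span, 48 kHz sample rate, and 32768-point FFT are assumptions.

```python
def multitone_frequencies(n_tones: int = 32, f_lo: float = 20.0, f_hi: float = 20e3,
                          fs: float = 48e3, fft_len: int = 32768) -> list[float]:
    """Log-spaced tone frequencies snapped to the nearest FFT bin centre.

    Snapping to bins keeps each tone coherent with the analysis record so
    its energy stays in one bin. All defaults are hypothetical.
    """
    bin_hz = fs / fft_len
    ratio = (f_hi / f_lo) ** (1.0 / (n_tones - 1))  # constant ratio between tones
    freqs = []
    for k in range(n_tones):
        f = f_lo * ratio ** k
        freqs.append(round(f / bin_hz) * bin_hz)
    return freqs


if __name__ == "__main__":
    for f in multitone_frequencies():
        print(f"{f:9.2f} Hz")
```

The tone phases also matter, since they set the crest factor and thus how much average power fits under a given clipping peak; they are left out of this sketch.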

The observant reader will notice that the lower-frequency tones in the plot above have slightly lower amplitude than the rest. This is due to the low-frequency gain rolloff of the amp. The frequency response of the QRV08 is shown below. The midband gain is 12.7 dB and the -3 dB frequency is 20 Hz.
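Two numbers from this paragraph are easy to translate: 12.7 dB of gain is a voltage ratio of about 4.3, and a high-pass with its -3 dB point at 20 Hz still attenuates tones an octave above the corner by about 1 dB. The sketch assumes the low-frequency rolloff is first order (a single coupling capacitor); the review does not state the order, so that is an assumption.

```python
import math


def db_to_voltage_ratio(db: float) -> float:
    """Convert a gain in dB to a voltage ratio."""
    return 10 ** (db / 20.0)


def highpass_db(f_hz: float, fc_hz: float = 20.0) -> float:
    """Magnitude (dB) of a first-order high-pass with its -3 dB corner at fc."""
    return -10.0 * math.log10(1.0 + (fc_hz / f_hz) ** 2)


if __name__ == "__main__":
    print(f"12.7 dB -> x{db_to_voltage_ratio(12.7):.2f}")
    for f in (20, 40, 100, 1000):
        print(f"{f:5d} Hz: {highpass_db(f):6.2f} dB")
    # Cross-check against the table: 1.80 V RMS in -> output power into 300 ohm.
    v_out = 1.80 * db_to_voltage_ratio(12.7)
    print(f"1.80 V in -> {v_out:.2f} V out -> {v_out ** 2 / 300 * 1000:.0f} mW")
```

The last line reproduces the table's input sensitivity: 1.80 V RMS times the 12.7 dB gain gives about 7.8 V RMS, or roughly 200 mW into 300 Ω.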

The channel separation of the QRV08 is quite good. It measures a hair above 90 dB midband, degrading to about 85 dB at 20 kHz. The dual mono construction helps here. The measurement is shown below.

Finally, the residual mains hum and noise are shown below. Note that the mains components exceed the noise floor up to nearly 4 kHz, which is surprising given that the design includes four "Extremely High Speed Super Regulators". Also note the 1/f slope of the noise floor up to 500 Hz. The residual mains hum + noise measures 6.5 µV RMS (unweighted, integrated over 20 Hz - 20 kHz).
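A single RMS figure like this is obtained by integrating the noise spectrum over the measurement band: add up the power in each FFT bin between 20 Hz and 20 kHz (hum spurs included) and take the square root. The sketch below assumes the spectrum is given as a noise density in V/√Hz on uniformly spaced bins. The flat 46 nV/√Hz spectrum in the example is hypothetical, chosen only because it integrates to about 6.5 µV; the real spectrum has the 1/f slope and hum spurs described above.

```python
import math


def integrated_noise_rms(freqs_hz: list[float], density_v_per_rthz: list[float],
                         f_lo: float = 20.0, f_hi: float = 20e3) -> float:
    """Integrate a noise density (V/sqrt(Hz)) over [f_lo, f_hi] to an RMS voltage.

    Assumes uniformly spaced bins; each bin contributes density^2 * bin width.
    """
    df = freqs_hz[1] - freqs_hz[0]
    power = sum(d * d * df for f, d in zip(freqs_hz, density_v_per_rthz)
                if f_lo <= f <= f_hi)
    return math.sqrt(power)


def to_dbv(v_rms: float) -> float:
    """Express an RMS voltage in dBV (dB relative to 1 V RMS)."""
    return 20.0 * math.log10(v_rms)


if __name__ == "__main__":
    freqs = [float(k) for k in range(0, 24001)]  # 1 Hz bins, hypothetical
    density = [46e-9] * len(freqs)               # flat 46 nV/sqrt(Hz), hypothetical
    v = integrated_noise_rms(freqs, density)
    print(f"{v * 1e6:.2f} uV RMS = {to_dbv(v):.1f} dBV")
```

For reference, 6.5 µV RMS corresponds to about -103.7 dBV.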

The QRV08 has a very high slew rate (80 V/µs) and high bandwidth (1.5 MHz), so stability with capacitive loads can be an issue. I tested load capacitances in the range of 47 pF to 2.2 nF in parallel with a 300 Ω resistive load. At 470 pF || 300 Ω, some degradation of the edges in the transient response can be seen. At 1 nF || 300 Ω, slight overshoot develops, and at 2.2 nF || 300 Ω, the amplifier oscillates. A typical headphone cable has a capacitance of around 33 pF/m, so the QRV08 should be able to drive more than 10 to 20 meters of cable. That should be more than enough for everybody.
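Two quick calculations tie these numbers together. The full-power bandwidth of a slew-limited amplifier is SR / (2π·Vpk); with 80 V/µs and the 11.6 V peak swing this comes to about 1.1 MHz, close to the 1.15 MHz in the table. The cable estimate is simply the capacitance at which degradation starts divided by the cable's capacitance per meter. The sketch treats the cable as a lumped capacitance and uses the 33 pF/m figure from the text; both are simplifications.

```python
import math


def full_power_bandwidth(slew_v_per_us: float, v_peak: float) -> float:
    """Highest frequency (Hz) a sine of peak amplitude v_peak can reach
    without slew limiting: f = SR / (2 * pi * Vpk)."""
    return slew_v_per_us * 1e6 / (2 * math.pi * v_peak)


def max_cable_length_m(c_limit_f: float, c_per_m_f: float = 33e-12) -> float:
    """Cable length whose (lumped) capacitance equals a given load-capacitance limit."""
    return c_limit_f / c_per_m_f


if __name__ == "__main__":
    print(f"FPBW: {full_power_bandwidth(80, 11.6) / 1e6:.2f} MHz")
    for c in (470e-12, 1e-9):
        print(f"{c * 1e12:5.0f} pF -> {max_cable_length_m(c):4.1f} m")
```

With 470 pF as the point where edges start to degrade, the estimate is about 14 m of cable; with 1 nF it is about 30 m.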

The transient response for a purely resistive load is shown below. It's nice and clean with sharp edges.

As mentioned above, with 1 nF added in parallel with the 300 Ω load, slight overshoot and some ringing develop. This is to be expected for a high-speed amplifier.

Listening Impressions

The QRV08 is quite pleasing to listen to across most music genres. The bass is quite precise but sounds slightly boosted, or warm, to me. Perhaps the unusual THD+N profile comes into play here, adding a bit of harmonic extension at low frequencies. I find the highs a little muddy, albeit only slightly: there is a faint haze, similar to what I hear on most Schiit gear. This is noticeable on good recordings, such as Dire Straits' Brothers in Arms, and in particular when Celine Dion really lets loose on Falling Into You. That said, I can easily be accused of bias, as I offer a competing (and, in my view, better) product for sale. Most listeners will likely enjoy the QRV08, but discerning listeners and those with more demanding headphones than my Sennheiser HD-650 will likely yearn for more.

Conclusion

Overall, the QRV08 meets my expectations for a mid-to-high-end headphone amplifier. It provides quite nice performance, particularly considering that it is a discrete design. It delivers enough output power for most dynamic headphones, though with its 120 mA / 11.6 V peak limits, owners of high-end headphones such as the HIFIMAN HE6 or Audeze LCD2 will likely want more output power.

The -3 dB frequency of 20 Hz is rather high. I prefer a cutoff below 2 Hz to avoid excessive gain and phase variation within the audio band. It should be possible to address this by increasing the value of the input AC coupling capacitor, assuming there is enough room on the PCB for a larger capacitor.
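Since the corner of a first-order RC high-pass is fc = 1 / (2πRC), moving it from 20 Hz to 2 Hz with the same input resistance takes a capacitor ten times larger, and it shrinks the phase shift at 20 Hz from 45° to under 6°. The amplifier's input resistance is not given in this review, so the 47 kΩ below is a hypothetical value used only to show the arithmetic.

```python
import math


def coupling_cap_farads(r_in_ohms: float, fc_hz: float) -> float:
    """Capacitance for a first-order RC high-pass corner: C = 1 / (2 pi R fc)."""
    return 1.0 / (2 * math.pi * r_in_ohms * fc_hz)


def highpass_phase_deg(f_hz: float, fc_hz: float) -> float:
    """Phase lead (degrees) of a first-order high-pass at frequency f."""
    return math.degrees(math.atan(fc_hz / f_hz))


if __name__ == "__main__":
    R_IN = 47e3  # hypothetical input resistance, not stated in the review
    for fc in (20.0, 2.0):
        c = coupling_cap_farads(R_IN, fc)
        phase = highpass_phase_deg(20.0, fc)
        print(f"fc = {fc:4.1f} Hz: C = {c * 1e6:.2f} uF, phase at 20 Hz = {phase:.1f} deg")
```

Whatever the actual input resistance is, the capacitor ratio stays at 10:1, which is what determines whether a larger part fits on the PCB.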

I am intrigued by the peculiar THD+N versus frequency profile of this amplifier. The fact that the THD+N improves with a heavier load (lower load impedance) suggests to me that the output stage of the QRV08 may be biased a bit too lightly.
